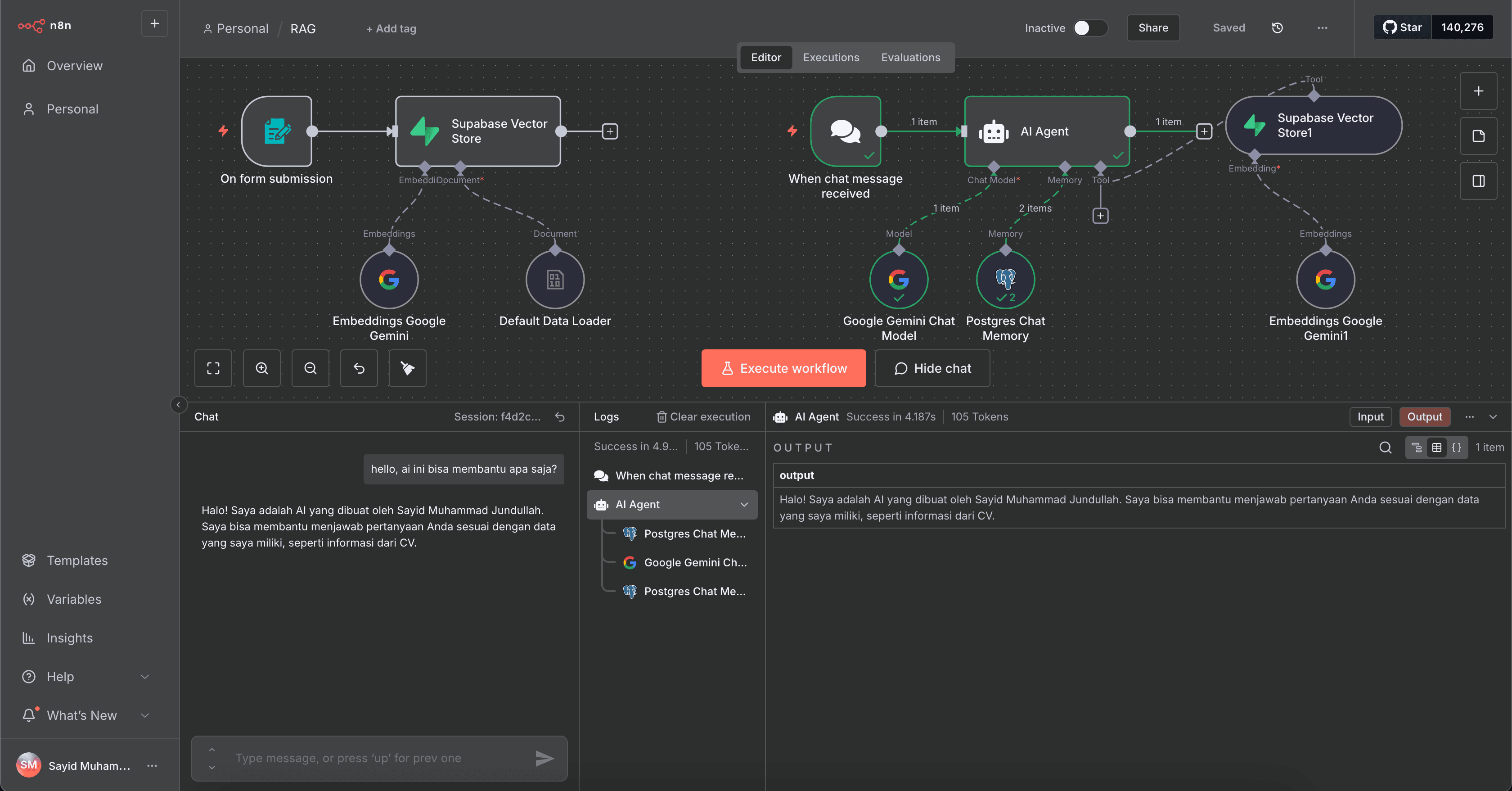

Personalized AI Assistant with N8N, Gemini, and RAG.

This project is an intelligent chatbot built using the automation platform N8N. The chatbot has Retrieval-Augmented Generation (RAG) capability, enabling it to answer questions based on personal information stored in a database, not just the general knowledge of the language model.

The system uses the Google Gemini API (the embedding-001 model for semantic understanding and gemini-1.5-flash for response generation) with PostgreSQL as its storage layer. Its main features include:

- Personal Knowledge Management: Upload your PDF documents (such as a CV, portfolio, or notes) and the system will automatically process them, split them into chunks, convert them into vector embeddings using Gemini, and store them in PostgreSQL.

- Contextual Intelligent Conversation: When a user asks a question, the chatbot searches your personal knowledge database for the most relevant information and generates an accurate, contextual answer using the Gemini model.

- Conversation Memory (Chat History): The entire conversation history is stored in structured form in PostgreSQL, allowing the chatbot to remember questions and context from previous interactions, so the conversation feels more natural and continuous.

- End-to-End Automation with N8N: N8N acts as the orchestrator that connects all components, from webhook endpoints and file processing to database interaction and Gemini API calls, without the need for complex code.
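The chunking step mentioned under Personal Knowledge Management can be illustrated with a minimal Python sketch. The project does not state how it splits text, so the fixed-size character windows, the `split_into_chunks` name, and the `chunk_size` and `overlap` defaults are all assumptions made for illustration; the actual workflow presumably handles this inside N8N.

```python
def split_into_chunks(text: str, chunk_size: int = 500, overlap: int = 50) -> list[str]:
    """Split text into overlapping character windows (hypothetical helper).

    The overlap keeps a sentence that straddles a boundary visible in
    both neighbouring chunks, which tends to help retrieval later.
    """
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must satisfy 0 <= overlap < chunk_size")

    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(text), step):
        chunk = text[start:start + chunk_size]
        if chunk.strip():  # skip windows that contain only whitespace
            chunks.append(chunk)
        if start + chunk_size >= len(text):
            break  # this window already reached the end of the text
    return chunks
```

Each returned chunk would then be sent to the embedding model and stored alongside its vector. Splitting on sentence or paragraph boundaries is a common alternative when chunks need to stay self-contained.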

Core Workflow:

- Ingestion: The user uploads a PDF via the N8N interface. N8N processes the PDF, splits the text, and calls the Gemini Embedding API.

- Storage: The text along with its vector embedding is stored as a row in the `documents` table in PostgreSQL.

- Retrieval: When a chat message arrives, the user's question is also embedded. PostgreSQL performs a similarity search to find the most relevant text chunks.

- Generation: The relevant text chunks are sent along with the prompt and chat history to the Gemini `gemini-1.5-flash` model to generate the final answer.

- Memory Storage: The question and answer are then stored in the `chat_history` table in PostgreSQL.
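The retrieval and generation steps above can be sketched in plain Python. This is only an in-memory illustration under stated assumptions: in the project the similarity search runs inside PostgreSQL, the distance metric is not specified (cosine similarity is assumed here), and the function names, row layout, and prompt wording are hypothetical.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors (assumed metric)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # a zero vector has no direction to compare
    return dot / (norm_a * norm_b)


def top_k_chunks(query_embedding: list[float],
                 rows: list[tuple[str, list[float]]],
                 k: int = 3) -> list[str]:
    """Return the text of the k rows most similar to the query.

    `rows` stands in for (content, embedding) pairs read from the
    documents table; the real column names are not given in the source.
    """
    ranked = sorted(
        rows,
        key=lambda row: cosine_similarity(query_embedding, row[1]),
        reverse=True,
    )
    return [content for content, _ in ranked[:k]]


def build_prompt(question: str,
                 chunks: list[str],
                 history: list[tuple[str, str]]) -> str:
    """Combine retrieved chunks, prior turns, and the new question."""
    context = "\n\n".join(chunks)
    past = "\n".join(f"User: {q}\nAssistant: {a}" for q, a in history)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Chat history:\n{past}\n\n"
        f"Question: {question}"
    )
```

The resulting prompt string is what would be passed to the generation model, and the question and answer pair would afterwards be appended to the stored history so the next turn can include it.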

Project Details

Live Site: Not Available

Source Code: Not Available

Tech Stack

N8N, Supabase, Google Gemini, PostgreSQL